Scooped by nrip

Digital health tools, platforms, and artificial intelligence– or machine learning–based clinical decision support systems are increasingly part of health delivery approaches, with an ever-greater degree of system interaction. Critical to the successful deployment of these tools is their functional integration into existing clinical routines and workflows. This depends on system interoperability and on intuitive and safe user interface design. It is extremely important that research and efforts are directed towards minimizing emergent workflow stress and ensuring purposeful design for integration. Usability of tools in practice is as important as algorithm quality. Regulatory and health technology assessment frameworks recognize the importance of these factors to a certain extent, but their focus remains mainly on the individual product rather than on emergent system and workflow effects. The measurement of performance and user experience has so far been performed in ad hoc, nonstandardized ways by individual actors using their own evaluation approaches. This paper proposes that a standard framework for system-level and holistic evaluation could be built into interacting digital systems to enable systematic and standardized system-wide, multiproduct, postmarket surveillance and technology assessment. Such a system could be made available to developers through regulatory or assessment bodies as an application programming interface and could be a requirement for digital tool certification, just as interoperability is. This would enable health systems and tool developers to collect system-level data directly from real device use cases, enabling the controlled and safe delivery of systematic quality assessment or improvement studies suitable for the complexity and interconnectedness of clinical workflows using developing digital health technologies. read the entire paper at https://www.jmir.org/2023/1/e50158
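The paper does not specify what such an application programming interface would look like. As a purely illustrative sketch, assuming a hypothetical event schema (`WorkflowEvent`) and a hypothetical aggregation function (`summarize`), neither of which comes from the paper, usage data reported by several interacting tools might be summarized per tool like this:

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class WorkflowEvent:
    """One usage event reported by a digital tool (hypothetical schema)."""
    tool_id: str       # which product emitted the event
    task: str          # clinical task, e.g. "triage"
    duration_s: float  # time the user spent on the task
    completed: bool    # False if the user abandoned or overrode the tool


def summarize(events):
    """Aggregate events per tool into simple system-level usability metrics."""
    by_tool = defaultdict(list)
    for event in events:
        by_tool[event.tool_id].append(event)
    report = {}
    for tool_id, evs in by_tool.items():
        report[tool_id] = {
            "n_events": len(evs),
            "median_duration_s": median(e.duration_s for e in evs),
            "completion_rate": sum(e.completed for e in evs) / len(evs),
        }
    return report
```

The metrics chosen here (median task duration and completion rate) are stand-ins; a real framework would define its own standardized measures.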


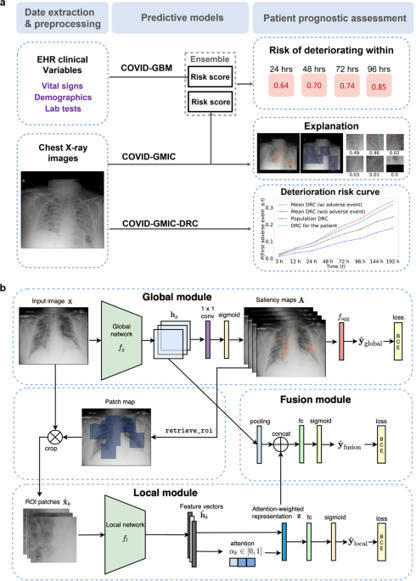

During the coronavirus disease 2019 (COVID-19) pandemic, rapid and accurate triage of patients at the emergency department is critical to inform decision-making. We propose a data-driven approach for automatic prediction of deterioration risk using a deep neural network that learns from chest X-ray images and a gradient boosting model that learns from routine clinical variables. Our AI prognosis system, trained using data from 3661 patients, achieves an area under the receiver operating characteristic curve (AUC) of 0.786 (95% CI: 0.745–0.830) when predicting deterioration within 96 hours. The deep neural network extracts informative areas of chest X-ray images to assist clinicians in interpreting the predictions and performs comparably to two radiologists in a reader study. In order to verify performance in a real clinical setting, we silently deployed a preliminary version of the deep neural network at New York University Langone Health during the first wave of the pandemic, which produced accurate predictions in real-time. In summary, our findings demonstrate the potential of the proposed system for assisting front-line physicians in the triage of COVID-19 patients. read the open article at https://www.nature.com/articles/s41746-021-00453-0
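To make the reported metric concrete, here is a minimal sketch of how an AUC and a 95% confidence interval can be computed from labels and model scores. It assumes a percentile bootstrap for the interval; the article summary does not say which method the authors used, and the function names are illustrative.

```python
import random


def auc(labels, scores):
    """AUC as the probability that a random positive case scores higher
    than a random negative case (ties count as half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def bootstrap_ci(labels, scores, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the AUC (assumed method)."""
    rng = random.Random(seed)
    n = len(labels)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if 0 < sum(ys) < n:  # a resample must contain both classes
            stats.append(auc(ys, [scores[i] for i in idx]))
    stats.sort()
    lower = stats[round(alpha / 2 * n_boot)]
    upper = stats[round((1 - alpha / 2) * n_boot) - 1]
    return lower, upper
```

An AUC of 0.786 therefore means that, for a randomly chosen pair of one deteriorating and one non-deteriorating patient, the model ranks the deteriorating patient higher about 79% of the time.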


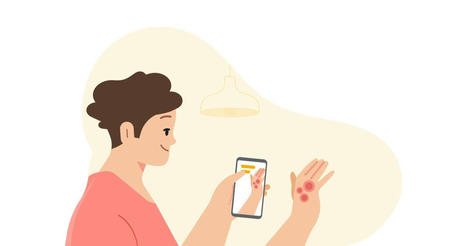

Google's AI-powered tool, which will be available later this year, helps anyone identify skin conditions using their phone’s camera. Artificial intelligence (AI) has the potential to help clinicians care for patients and treat disease, from improving the screening process for breast cancer to helping detect tuberculosis more efficiently. When we combine these advances in AI with other technologies, like smartphone cameras, we can unlock new ways for people to stay better informed about their health, too. Google's AI-powered dermatology assist tool is a web-based application that the company hopes to launch as a pilot later this year, to make it easier for users to figure out what might be going on with their skin. Once users launch the tool, they simply use their phone’s camera to take three images of the skin, hair or nail concern from different angles. They are then asked questions about their skin type, how long they’ve had the issue and other symptoms that help the tool narrow down the possibilities. The AI model analyzes this information and draws from its knowledge of 288 conditions to give the user a list of possible matching conditions that they can then research further. For each matching condition, the tool will show dermatologist-reviewed information and answers to commonly asked questions, along with similar matching images from the web. The tool is not intended to provide a diagnosis nor be a substitute for medical advice, as many conditions require clinician review, in-person examination, or additional testing like a biopsy. Rather, Google hopes it gives users access to authoritative information so they can make a more informed decision about their next step.

Developing an AI model that assesses issues for all skin types

Google's tool is the culmination of over three years of machine learning research and product development. To date, Google has published several peer-reviewed papers that validate its AI model, and the company says more are in the works. Recently, the AI model that powers the tool successfully passed clinical validation, and the tool has been CE marked as a Class I medical device in the EU. more at https://blog.google/technology/health/ai-dermatology-preview-io-2021/


Researchers at Duke University are developing an artificial intelligence tool for toilets that would help providers improve care management for patients with gastrointestinal issues through remote patient monitoring. The tool, which can be installed in the pipes of a toilet and analyzes stool samples, has the potential to improve treatment of chronic gastrointestinal issues like inflammatory bowel disease or irritable bowel syndrome, according to a press release. When a patient flushes the toilet, the mHealth platform photographs the stool as it moves through the pipes. That data is sent to a gastroenterologist, who can analyze the data for evidence of chronic issues. A study conducted by Duke University researchers found that the platform had an 85.1 percent accuracy rate on stool form classification and a 76.3 percent accuracy rate on detection of gross blood. read the entire article at https://mhealthintelligence.com/news/ai-toilet-tool-offers-remote-patient-monitoring-for-gastrointestinal-health


As the use of artificial intelligence (AI) in health applications grows, health providers are looking for ways to improve patients' experience with their machine doctors. Researchers from Penn State and University of California, Santa Barbara (UCSB) found that people may be less likely to take health advice from an AI doctor when the robot knows their name and medical history. On the other hand, patients want to be on a first-name basis with their human doctors. When the AI doctor used the first name of the patients and referred to their medical history in the conversation, study participants were more likely to consider an AI health chatbot intrusive and also less likely to heed the AI's medical advice, the researchers added. However, they expected human doctors to differentiate them from other patients and were less likely to comply when a human doctor failed to remember their information. The findings offer further evidence that machines walk a fine line in serving as doctors. Machines do have advantages as medical providers, said Joseph B. Walther, distinguished professor in communication and the Mark and Susan Bertelsen Presidential Chair in Technology and Society at UCSB. He said that, like a family doctor who has treated a patient for a long time, computer systems could — hypothetically — know a patient’s complete medical history. In comparison, seeing a new doctor or a specialist who knows only your latest lab tests might be a more common experience, said Walther, who is also director of the Center for Information Technology and Society at UCSB. “This struck us with the question: ‘Who really knows us better: a machine that can store all this information, or a human who has never met us before or hasn’t developed a relationship with us, and what do we value in a relationship with a medical expert?’” said Walther. 
“So this research asks, who knows us better, and who do we like more?”

Accepting AI doctors

As medical providers look for cost-effective ways to provide better care, AI medical services may provide one alternative. However, AI doctors must provide care and advice that patients are willing to accept, according to Cheng Chen, doctoral student in mass communications at Penn State. “One of the reasons we conducted this study was that we read in the literature a lot of accounts of how people are reluctant to accept AI as a doctor,” said Chen. “They just don’t feel comfortable with the technology and they don’t feel that the AI recognizes their uniqueness as a patient. So, we thought that because machines can retain so much information about a person, they can provide individuation, and solve this uniqueness problem.” The findings suggest that this strategy can backfire. “When an AI system recognizes a person’s uniqueness, it comes across as intrusive, echoing larger concerns with AI in society,” said Sundar. In the future, the researchers expect more investigations into the roles that authenticity and the ability of machines to engage in back-and-forth questions may play in developing better rapport with patients. read more at https://news.psu.edu/story/657391/2021/05/10/research/patients-may-not-take-advice-ai-doctors-who-know-their-names


Numerous studies demonstrate frequent mutations in the genome of SARS-CoV-2. Our goal was to statistically link mutations to severe disease outcome. We found that automated machine learning, such as the method of Tsamardinos and coworkers used here, is a versatile and effective tool to find salient features in large and noisy databases, such as the fast-growing collection of SARS-CoV-2 genomes. In this work we used machine learning techniques to select mutation signatures associated with severe SARS-CoV-2 infections. We grouped patients into two major categories (“mild” and “severe”) by grouping the 179 outcome designations in the GISAID database. A protocol combining logistic regression and feature selection algorithms revealed that mutation signatures of about twenty mutations can be used to separate the two groups. The mutation signature is in good agreement with the variants well known from previous genome sequencing studies, including Spike protein variants V1176F and S477N that co-occur with D614G mutations and account for a large proportion of fast-spreading SARS-CoV-2 variants. UTR mutations were also selected as part of the best mutation signatures. The mutations identified here are also part of previous, statistically derived mutation profiles. An online prediction platform was set up that can assign a probabilistic measure of infection severity to SARS-CoV-2 sequences, including a qualitative index of the strength of the diagnosis. The data confirm that machine learning methods can be conveniently used to select genomic mutations associated with disease severity, but one has to be cautious that such statistical associations – like common sequence signatures, or marker fingerprints in general – are by no means causal relations, unless confirmed by experiments. Our plans are to update the prediction server at regular time intervals.
While this project was underway, more than 100 thousand sequences were deposited in public databases, and importantly, new variants emerged in the UK and in South Africa that are not yet included in the current datasets. In addition to mutations, we also plan to include insertions and deletions, which will hopefully further improve the predictive power of the server. The study was funded by the Hungarian Ministry for Innovation and Technology (MIT), within the framework of the Bionic thematic programme of the Semmelweis University. Read the entire study at https://www.biorxiv.org/content/10.1101/2021.04.01.438063v1.full Access the online portal mentioned above at https://covidoutcome.com/


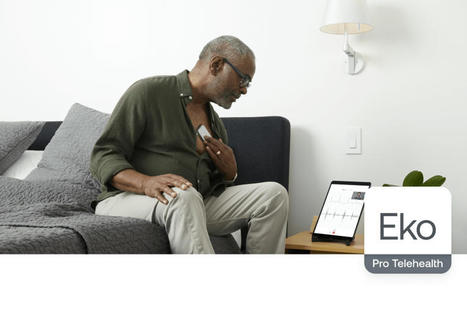

Eko, a cardiopulmonary digital health company, today announced the peer-reviewed publication of a clinical study that found that the Eko artificial intelligence (AI) algorithm for detecting heart murmurs is accurate and reliable, with comparable performance to that of an expert cardiologist. These findings suggest utility of the FDA-cleared Eko AI algorithm as a front line clinical tool to aid clinicians in screening for cardiac murmurs that may be caused by valvular heart disease. For moderate-to-severe aortic stenosis, the algorithm was found to have sensitivity of 93.2% and specificity of 86.0%. The algorithm significantly outperformed general practitioners listening for moderate-to-severe valvular heart disease, as a 2018 study showed general practitioners had sensitivity of 44% and specificity of 69%.
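For readers less familiar with these metrics, the following sketch shows how sensitivity and specificity are derived from confusion-matrix counts, and how the reported percentages translate into likelihood ratios. The patient counts in the usage note are hypothetical and chosen only to reproduce the published percentages; they are not taken from the study.

```python
def sensitivity(tp, fn):
    """Share of patients with the condition that the test flags."""
    return tp / (tp + fn)


def specificity(tn, fp):
    """Share of patients without the condition that the test clears."""
    return tn / (tn + fp)


def likelihood_ratios(sens, spec):
    """Positive and negative likelihood ratios from sensitivity and specificity."""
    lr_positive = sens / (1 - spec)
    lr_negative = (1 - sens) / spec
    return lr_positive, lr_negative


# Figures quoted above: algorithm vs. general practitioners.
algo_lr_pos, algo_lr_neg = likelihood_ratios(0.932, 0.860)
gp_lr_pos, gp_lr_neg = likelihood_ratios(0.44, 0.69)
```

With hypothetical counts of 932 true positives and 68 false negatives, `sensitivity(932, 68)` gives 0.932. A higher positive likelihood ratio means a positive result shifts the odds of disease more strongly, which is one way to compare the two sets of figures.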


The Mayo Clinic has launched a new mHealth platform aimed at helping healthcare providers improve their use of connected health devices in remote patient monitoring and other mobile health programs. The Remote Diagnostic and Management Platform (RDMP) connects devices to AI resources that would help providers with clinical decision support and diagnoses in what the Minnesota-based health system calls “event-driven medicine.” It’s designed to help providers in and outside the health system analyze and act on data collected by mHealth devices. “The dramatically increased use of remote patient telemetry devices coupled with the rapidly accelerating development of AI and machine learning algorithms has the potential to revolutionize diagnostic medicine. With RDMP, clinicians will have access to best-in-class algorithms and care protocols and will be able to serve more patients effectively in remote care settings. The platform will also enable patients to take more control of their health and make better decisions based on insights delivered directly to them.” read more at https://mhealthintelligence.com/news/mayo-clinic-launches-new-platform-for-analyzing-data-from-mhealth-devices


Imagine you’re a scientist who needs to discover a new antibiotic to fight off a scary disease. How would you go about finding it? Typically, you’d have to test lots and lots of different molecules in the lab until you find one that has the necessary bacteria-killing properties. You might find some contenders that are good at killing the bacteria only to realize that you can’t use them because they also prove toxic to humans. It’s a very long, very expensive, and probably very aggravating process. But what if, instead, you could just type into your computer the properties you’re looking for and have your computer design the perfect molecule for you? That’s the general approach IBM researchers are taking, using an AI system that can automatically generate the design of molecules for new antibiotics. In a new paper, published in Nature Biomedical Engineering, the researchers detail how they’ve already used it to quickly design two new antimicrobial peptides (small molecules that can kill bacteria) that are effective against a bunch of different pathogens in mice. Normally, this molecule discovery process would take scientists years. The AI system did it in a matter of days. That’s great news, because we urgently need faster ways to create new antibiotics.

How IBM’s AI system works

IBM’s new AI system relies on something called a generative model. To understand it at its simplest level, we can break it down into three basic steps. First, the researchers start with a massive database of known peptide molecules. Then the AI pulls information from the database and analyzes the patterns to figure out the relationship between molecules and their properties. It might find that when a molecule has a certain structure or composition, it tends to perform a certain function. This allows it to “learn” the basic rules of molecule design. Finally, researchers can tell the AI exactly what properties they want a new molecule to have. They can also input constraints (for example: low toxicity, please!). Using this info on desirable and undesirable traits, the AI then designs new molecules that satisfy the parameters. The researchers can pick the best one from among them and start testing on mice in a lab. The IBM researchers claim that their approach outperformed other leading methods for designing new antimicrobial peptides by 10 percent. They found that they were able to design two new antimicrobial peptides that are highly potent against diverse pathogens, including multidrug-resistant K. pneumoniae, a bacterium known for causing infections in hospital patients. Happily, the peptides had low toxicity when tested in mice, an important signal about their safety (though not everything that’s true for mice ends up being generalizable to humans). read the original unedited article at https://www.vox.com/future-perfect/22360573/ai-ibm-design-new-antibiotics-covid-19-treatments read the paper by the IBM researchers - Accelerated antimicrobial discovery via deep generative models and molecular dynamics simulations
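The last step described above (state the desired properties and constraints, then keep only the designs that satisfy them) can be sketched as a generate-then-filter loop. This is a toy illustration, not IBM's system: the random sampler stands in for a trained generative model, and the two property functions are made-up proxies rather than real activity or toxicity predictors.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def sample_peptide(rng, length=12):
    """Stand-in generator. A trained generative model would sample from
    patterns learned on known peptides instead of uniformly at random."""
    return "".join(rng.choice(AMINO_ACIDS) for _ in range(length))


def predicted_activity(seq):
    """Toy proxy (assumption): fraction of positively charged residues K and R."""
    return sum(c in "KR" for c in seq) / len(seq)


def predicted_toxicity(seq):
    """Toy proxy (assumption): fraction of hydrophobic residues F, W, L and I."""
    return sum(c in "FWLI" for c in seq) / len(seq)


def design(n_samples, min_activity, max_toxicity, seed=0):
    """Sample candidates, keep those meeting both constraints, best first."""
    rng = random.Random(seed)
    candidates = (sample_peptide(rng) for _ in range(n_samples))
    kept = [
        s for s in candidates
        if predicted_activity(s) >= min_activity
        and predicted_toxicity(s) <= max_toxicity
    ]
    return sorted(kept, key=predicted_activity, reverse=True)
```

Calling `design(2000, min_activity=0.25, max_toxicity=0.2)` returns the surviving candidates ranked by the toy activity score, mirroring how researchers would pick the most promising designs for lab testing.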


Artificial intelligence (AI) is slowly demonstrating its ability to improve healthcare. Typical examples are:

- Predicting health outcomes

- Reducing workflow inefficiencies

- Assisting in triaging

However, questions remain about how to ensure these technologies and tools are developed, implemented and maintained responsibly.

A NAM report published in the JAMA Viewpoint column, “Artificial Intelligence in Health Care: A Report From the National Academy of Medicine,” recommends that people developing, using, implementing and regulating health care AI do seven key things:

- Promote population-representative data with accessibility, standardization and quality. [to ensure accuracy for all populations]

- Prioritize ethical, equitable and inclusive medical AI while addressing explicit and implicit bias. [to understand the potential of the underlying biases to worsen or address existing inequity]

- Contextualize the dialogue of transparency and trust, which means accepting differential needs. [to clarify the level of transparency needed across AI developers, implementation teams, users and regulators]

- Focus in the near term on augmented intelligence rather than AI autonomous agents. [supporting data synthesis, interpretation and decision-making by clinicians and patients is where opportunities are now]

- Develop and deploy appropriate training and educational programs. [training programs must be multidisciplinary and should engage AI developers, implementers, health care system leadership, frontline clinical teams, ethicists, humanists, patients and caregivers]

- Leverage frameworks and best practices for learning health care systems, human factors and implementation science. [have a robust and mature IT governance strategy in place before health delivery systems use AI formally]

- Balance innovation with safety through regulation and legislation to promote trust. [evaluate deployed clinical AI for effectiveness and safety based on clinical data]

read more at https://www.ama-assn.org/practice-management/digital/7-tips-responsible-use-health-care-ai


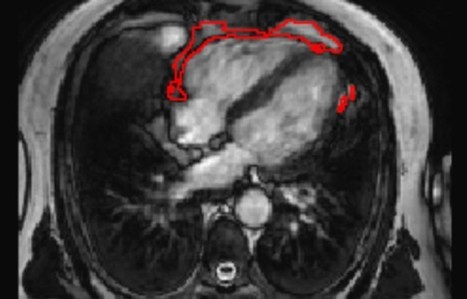

The distribution of fat in the body can influence a person's risk of developing various diseases. The commonly used measure of body mass index (BMI) mostly reflects fat accumulation under the skin, rather than around the internal organs. In particular, there are suggestions that fat accumulation around the heart may be a predictor of heart disease, and has been linked to a range of conditions, including atrial fibrillation, diabetes, and coronary artery disease. A team led by researchers from Queen Mary University of London has developed a new artificial intelligence (AI) tool that is able to automatically measure the amount of fat around the heart from MRI scan images. Using the new tool, the team was able to show that a larger amount of fat around the heart is associated with significantly greater odds of diabetes, independent of a person's age, sex, and body mass index. The research team invented an AI tool that can be applied to standard heart MRI scans to obtain a measure of the fat around the heart automatically and quickly, in under three seconds. This tool can be used by future researchers to discover more about the links between the fat around the heart and disease risk, but also potentially in the future, as part of a patient's standard care in hospital. The research team tested the AI algorithm's ability to interpret images from heart MRI scans of more than 45,000 people, including participants in the UK Biobank, a database of health information from over half a million participants from across the UK. 
The team found that the AI tool was able to accurately determine the amount of fat around the heart in those images, and it was also able to calculate a patient's risk of diabetes. read the research published at https://www.frontiersin.org/articles/10.3389/fcvm.2021.677574/full read more at https://www.sciencedaily.com/releases/2021/07/210707112427.htm also at the QMUL website https://www.qmul.ac.uk/media/news/2021/smd/ai-predicts-diabetes-risk-by-measuring-fat-around-the-heart-.html


Patient travel history can be crucial in evaluating evolving infectious disease events. Such information can be challenging to acquire in electronic health records, as it is often available only in unstructured text.

Objective: This study aims to assess the feasibility of annotating and automatically extracting travel history mentions from unstructured clinical documents in the Department of Veterans Affairs across disparate health care facilities and among millions of patients. Information about travel exposure augments existing surveillance applications for increased preparedness in responding quickly to public health threats.

Methods: Clinical documents related to arboviral disease were annotated following selection using a semiautomated bootstrapping process. Using annotated instances as training data, models were developed to extract from unstructured clinical text any mention of affirmed travel locations outside of the continental United States. Automated text processing models were evaluated, involving machine learning and neural language models for extraction accuracy.

Results: Among 4584 annotated instances, 2659 (58%) contained an affirmed mention of travel history, while 347 (7.6%) were negated. Interannotator agreement resulted in a document-level Cohen kappa of 0.776. Automated text processing accuracy (F1 85.6, 95% CI 82.5-87.9) and computational burden were acceptable such that the system can provide a rapid screen for public health events.

Conclusions: Automated extraction of patient travel history from clinical documents is feasible for enhanced passive surveillance public health systems.

Without such a system, it would usually be necessary to manually review charts to identify recent travel or lack of travel, use an electronic health record that enforces travel history documentation, or ignore this potential source of information altogether. The development of this tool was initially motivated by emergent arboviral diseases. More recently, this system was used in the early phases of response to COVID-19 in the United States, although its utility was limited to a relatively brief window due to the rapid domestic spread of the virus. Such systems may aid future efforts to prevent and contain the spread of infectious diseases. read the study at https://publichealth.jmir.org/2021/3/e26719
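The two evaluation measures quoted in the Results (Cohen kappa for interannotator agreement and F1 for extraction accuracy) can be computed as in the sketch below. It is a generic illustration with made-up labels, not the study's evaluation code.

```python
from collections import Counter


def cohen_kappa(annotator_a, annotator_b):
    """Agreement between two annotators, corrected for chance agreement."""
    n = len(annotator_a)
    observed = sum(x == y for x, y in zip(annotator_a, annotator_b)) / n
    count_a, count_b = Counter(annotator_a), Counter(annotator_b)
    labels = count_a.keys() | count_b.keys()
    expected = sum(count_a[k] * count_b[k] for k in labels) / (n * n)
    if expected == 1:  # both annotators used a single identical label
        return 1.0
    return (observed - expected) / (1 - expected)


def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall from raw counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Made-up document-level labels from two annotators.
a = ["travel", "travel", "none", "none"]
b = ["travel", "none", "none", "none"]
kappa = cohen_kappa(a, b)
```

In this toy example the annotators agree on 3 of 4 documents, but half of that agreement is expected by chance, so kappa is 0.5.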


Anticipating the risk of gastrointestinal bleeding (GIB) when initiating antithrombotic treatment (oral antiplatelets or anticoagulants) is limited by existing risk prediction models. Machine learning algorithms may result in superior predictive models to aid in clinical decision-making. Objective: To compare the performance of 3 machine learning approaches with the commonly used HAS-BLED (hypertension, abnormal kidney and liver function, stroke, bleeding, labile international normalized ratio, older age, and drug or alcohol use) risk score in predicting antithrombotic-related GIB. Design, setting, and participants: This retrospective cross-sectional study used data from the OptumLabs Data Warehouse, which contains medical and pharmacy claims on privately insured patients and Medicare Advantage enrollees in the US. The study cohort included patients 18 years or older with a history of atrial fibrillation, ischemic heart disease, or venous thromboembolism who were prescribed oral anticoagulant and/or thienopyridine antiplatelet agents between January 1, 2016, and December 31, 2019. In this cross-sectional study, the machine learning models examined showed similar performance in identifying patients at high risk for GIB after being prescribed antithrombotic agents. Two models (RegCox and XGBoost) performed modestly better than the HAS-BLED score. A prospective evaluation of the RegCox model compared with HAS-BLED may provide a better understanding of the clinical impact of improved performance. link to the original investigation paper https://jamanetwork.com/journals/jamanetworkopen/fullarticle/2780274 read the pubmed article at https://pubmed.ncbi.nlm.nih.gov/34019087/
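The HAS-BLED baseline is a simple additive score, which a short sketch makes concrete. It assumes the commonly published scoring of one point per risk factor (nine items, with kidney and liver function and drug and alcohol use counted separately); the field names are illustrative, and the clinical thresholds behind each flag are not given in the summary above.

```python
from dataclasses import dataclass, fields


@dataclass
class HasBledInputs:
    """One boolean per HAS-BLED item (field names are illustrative)."""
    hypertension: bool = False
    abnormal_kidney_function: bool = False
    abnormal_liver_function: bool = False
    prior_stroke: bool = False
    bleeding_history: bool = False
    labile_inr: bool = False
    older_age: bool = False    # conventionally defined as age over 65 years
    drug_use: bool = False     # medications that predispose to bleeding
    alcohol_use: bool = False


def has_bled_score(patient):
    """Sum one point for each risk factor present (range 0 to 9)."""
    return sum(bool(getattr(patient, f.name)) for f in fields(patient))


example = HasBledInputs(hypertension=True, older_age=True, alcohol_use=True)
score = has_bled_score(example)
```

Because every item carries equal weight, the score cannot capture interactions between risk factors, which is one reason learned models such as those compared in the study may perform somewhat better.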


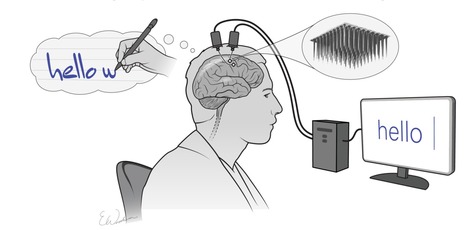

The combination of mental effort and state-of-the-art technology has allowed a man with a spinal injury and immobilized limbs to communicate by text at speeds rivaling those achieved by his able-bodied peers texting on a smartphone. Call it mindwriting. Scientists at Stanford University, Howard Hughes Medical Institute, and Brown University developed an implanted brain-computer interface (BCI) technology that uses artificial intelligence to convert brain signals generated when someone visualizes the process of handwriting into text on a computer, in real time. The team now reports on a trial in which a paralyzed clinical trial participant with a BCI implant was able to “type” words on a computer by merely thinking about the hand motions involved in creating written letters. The software effectively decoded the information from the BCI to quickly convert the man’s thoughts about handwriting into text on a computer screen. In the reported study, the 65-year-old male participant achieved a typing rate of 90 characters per minute, more than double the previous record for typing with a brain-computer interface. The goal is to restore the ability to communicate by text. According to Frank Willett, PhD, the first author of the paper, the system is so fast because each letter elicits a highly distinctive activity pattern, making it relatively easy for the algorithm to distinguish one from another. The new study is part of the BrainGate clinical trial, directed by Leigh Hochberg, MD, a neurologist and neuroscientist at Massachusetts General Hospital, Brown University, and the Providence VA Medical Center. The BrainGate collaboration has been working for several years on systems that enable people to generate text through direct brain control.
While a major focus of BCI research has been on restoring gross motor skills, as the team further noted, “rapid sequences of highly dexterous behaviors, such as handwriting or touch typing, might enable faster rates of communication.” What wasn’t known, they pointed out, was whether “… the neural representation for a rapid and highly dexterous motor skill, such as handwriting, also remains intact.” Next, the team intends to work with a participant who cannot speak, such as someone with amyotrophic lateral sclerosis, a degenerative neurological disorder that results in the loss of movement and speech. In addition, they are looking to increase the number of characters available to the participants, such as capital letters and numbers. read the paper "High performance Brain-to-Text communication via handwriting" read the article in its entirety at https://www.genengnews.com/news/brain-computer-interface-converts-mental-handwriting-into-written-text/


New artificial intelligence technology that uses a common CT angiography (CTA), as opposed to the more advanced imaging normally required to help identify patients who could benefit from endovascular stroke therapy (EST), is being developed at The University of Texas Health Science Center at Houston (UTHealth). Two UTHealth researchers worked together to create a machine-learning artificial intelligence tool that could be used for assessing a stroke at every hospital that takes care of stroke patients - not just at large academic hospitals in major cities. Research to further develop and test the technology tool is funded through a five-year, $2.5 million grant from the National Institutes of Health (NIH). "The vast majority of stroke patients don't show up at large hospitals, but in those smaller regional facilities. And most of the emphasis on screening techniques is only focused on the technologies used in those large academic centers. With this technology, we are looking to change that," said Sunil Sheth, MD, assistant professor of neurology at McGovern Medical School at UTHealth. Sheth set out with Luca Giancardo, PhD, assistant professor with the Center for Precision Health at UTHealth School of Biomedical Informatics, to develop a quicker way to assess patients. The result was a novel deep neural network architecture that leverages brain symmetry. Using CTAs, which are more widely available, the system can determine the presence or absence of a large vessel occlusion and whether the amount of "at-risk" tissue is above or below the thresholds seen in those patients who benefitted from EST in the clinical trials. "This is the first time a data set is being specifically collected aiming to address the lack of quality imaging available for stroke patients at smaller hospitals," Giancardo said. read the complete press release with further details on the work at https://www.uth.edu/news/story.htm?id=9fccdefb-ff91-4775-a759-a786689956ea


“ATMAN AI”, an artificial intelligence algorithm that can detect the presence of COVID-19 disease in chest X-rays, has been developed to combat COVID fatalities involving the lungs. ATMAN AI is used for chest X-ray screening as a triaging tool in COVID-19 diagnosis, a method for rapid identification and assessment of lung involvement. This is a joint effort of the DRDO Centre for Artificial Intelligence and Robotics (CAIR), 5C Network and HCG Academics. It will be utilized by online diagnostic startup 5C Network with the support of HCG Academics across India. Triaging suspected COVID patients using X-rays is fast, cost-effective and efficient. It can be a very useful tool, especially in smaller towns in India, owing to the lack of easy access to CT scans there. It will also reduce the existing burden on radiologists and, given the current overload of CT scans, free CT machines now being used for COVID for other diseases and illnesses. The novel feature, “Believable AI”, along with existing ResNet models, has improved the accuracy of the software and, because it is a machine learning tool, its accuracy will improve continually. Chest X-rays of RT-PCR-positive hospitalized patients in various stages of disease involvement were retrospectively analysed using deep learning and convolutional neural network models in an application developed indigenously by CAIR-DRDO for COVID-19 screening using digital chest X-rays. The algorithm showed an accuracy of 96.73%. read more at http://indiaai.gov.in/news/drdo-cair-5g-network-and-hcg-academics-develop-atman-ai

|

Scooped by

nrip

|

Since the start of the pandemic, new technologies have been developed to help reduce the spread of the infection. Some of the most common safety measures today include measuring a person’s temperature, covering the nose and mouth with a mask, contact tracing, disinfection, and social distancing. Many businesses have adopted various technologies, including AI-based ones, to help them adhere to COVID-19 safety measures. For example, numerous airlines, hotels, subways, shopping malls, and other institutions are already using thermal cameras to measure people’s temperature before allowing entry. Public transport in France, in turn, relies on AI-based surveillance cameras to monitor whether people are social-distancing or wearing masks. Another example is the requirement to download contact-tracing apps delivered by governments across the globe. While many of these solutions help to ensure that COVID-19 prevention practices are observed, many of them have flaws or limits. In this article, we will cover some of the issues creating obstacles to fighting the pandemic.

- Issue #1. Manual temperature scanning is tricky

- Issue #2. Monitoring crowds is even more complex

- Issue #3. Contact tracing leads to privacy concerns

- Issue #4. UV rays harm eyes and skin

- Issue #5. UVC robots are extremely expensive

- Issue #6. No integration, no compliance, no transparency

Regardless of the safety measures in place and existing issues, innovations are already playing a vital role in the fight against COVID-19. By improving on existing technology, we can make everyone safer as we all adjust to the new normal. read the details at https://www.altoros.com/blog/whats-wrong-with-ai-tools-and-devices-preventing-covid-19/

|

Scooped by

nrip

|

Two scientific leaps, in machine learning algorithms and in powerful biological imaging and sequencing tools, are increasingly being combined to spur progress in understanding diseases and to advance AI itself. Cutting-edge machine-learning techniques are increasingly being adapted and applied to biological data, including for COVID-19. Recently, researchers reported using a new technique to figure out how genes are expressed in individual cells and how those cells interact in people who had died with Alzheimer's disease. Machine-learning algorithms can also be used to compare the expression of genes in cells infected with SARS-CoV-2 to cells treated with thousands of different drugs in order to try to computationally predict drugs that might inhibit the virus. Algorithmic results alone don't prove the drugs are potent enough to be clinically effective. But they can help identify future targets for antivirals, or they could reveal a protein researchers didn't know was important for SARS-CoV-2, providing new insight into the biology of the virus. read the original article which speaks about a lot more at https://www.axios.com/ai-machine-learning-biology-drug-development-b51d18f1-7487-400e-8e33-e6b72bd5cfad.html
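As a rough illustration of the signature comparison described above, the following sketch scores each drug by how strongly its expression profile anti-correlates with an infection profile. This is a generic correlation-based approach chosen for illustration, not the method used by the researchers in the article; the function name `rank_by_reversal`, the drug names, and all numbers are hypothetical.

```python
import numpy as np

def rank_by_reversal(infection_sig, drug_sigs):
    """Rank drugs from most negative to most positive Pearson correlation
    with the infection signature (most negative = strongest reversal)."""
    scores = {
        name: float(np.corrcoef(infection_sig, sig)[0, 1])
        for name, sig in drug_sigs.items()
    }
    return sorted(scores.items(), key=lambda kv: kv[1])

# Made-up expression changes for five genes (illustrative values only).
infection = np.array([2.0, 1.5, -1.0, 0.5, -2.0])
drugs = {
    "drug_A": -infection,                              # exactly opposes the infection profile
    "drug_B": 0.5 * infection,                         # mimics the infection profile
    "drug_C": np.array([0.1, -0.2, 0.3, 0.0, -0.1]),   # weakly related profile
}

ranking = rank_by_reversal(infection, drugs)
for name, score in ranking:
    print(f"{name}: correlation {score:+.2f}")
```

As the article notes, a high score from a comparison like this only suggests a candidate; it says nothing about whether the drug is potent enough to be clinically effective.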

|

Scooped by

nrip

|

People often turn to technology to manage their health and wellbeing, whether it is to record their daily exercise, measure their heart rate, or, increasingly, to understand their sleep patterns.

Sleep is foundational to a person’s everyday wellbeing and can be impacted by (and in turn, have an impact on) other aspects of one’s life: mood, energy, diet, productivity, and more. As part of Google's ongoing efforts to support people’s health and happiness, Google has announced Sleep Sensing in the new Nest Hub, which uses radar-based sleep tracking in addition to an algorithm for cough and snore detection. The new Nest Hub, with its underlying Sleep Sensing features, is the first step in empowering users to understand their nighttime wellness using privacy-preserving radar and audio signals.

Understanding Sleep Quality with Audio Sensing

The Soli-based sleep tracking algorithm gives users a convenient and reliable way to see how much sleep they are getting and when sleep disruptions occur. However, to understand and improve their sleep, users also need to understand why their sleep is disrupted. To assist with this, Nest Hub uses its array of sensors to track common sleep disturbances, such as light level changes or uncomfortable room temperature. In addition to these, respiratory events like coughing and snoring are also frequent sources of disturbance, but people are often unaware of these events. As with other audio-processing applications like speech or music recognition, coughing and snoring exhibit distinctive temporal patterns in the audio frequency spectrum, and with sufficient data an ML model can be trained to reliably recognize these patterns while simultaneously ignoring a wide variety of background noises, from a humming fan to passing cars. The model uses entirely on-device audio processing with privacy-preserving analysis, with no raw audio data sent to Google’s servers. A user can then opt to save the outputs of the processing (sound occurrences, such as the number of coughs and snore minutes) in Google Fit, in order to view personal insights and summaries of their nighttime wellness over time.
read the entire unedited blog post at https://ai.googleblog.com/2021/03/contactless-sleep-sensing-in-nest-hub.html
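To make the idea of "temporal patterns in the audio frequency spectrum" concrete, here is a minimal sketch of how a spectrogram, the kind of time-frequency representation commonly fed to audio classifiers, can be computed. It is not Google's model or pipeline; the function name `spectrogram`, the frame sizes, and the synthetic test tone are all assumptions made for illustration.

```python
import numpy as np

def spectrogram(signal, sample_rate, frame_len=1024, hop=512):
    """Return (times, freqs, magnitudes) from a Hann-windowed short-time FFT."""
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice the signal into overlapping frames and apply the window to each.
    frames = np.stack([
        signal[i * hop : i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    mags = np.abs(np.fft.rfft(frames, axis=1))
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / sample_rate)
    times = (np.arange(n_frames) * hop + frame_len / 2) / sample_rate
    return times, freqs, mags

# Synthetic stand-in for a recording: one second of silence followed by
# one second of a 300 Hz tone (hypothetical data, not real cough/snore audio).
sample_rate = 16000
t = np.arange(2 * sample_rate) / sample_rate
signal = np.where(t >= 1.0, np.sin(2 * np.pi * 300 * t), 0.0)

times, freqs, mags = spectrogram(signal, sample_rate)
peak_freq = freqs[np.argmax(mags[-1])]  # dominant frequency in the final frame
print(f"frames: {mags.shape[0]}, dominant frequency at the end: {peak_freq:.1f} Hz")
```

A classifier would be trained on many labelled examples of such time-frequency arrays; this sketch only shows how a sound event appears as a change in the array over time.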

|


In recent conversations with FIND and WHO, we have discussed the framework that needs to be defined and put in place for assessing the effectiveness of AI tools for use in medicine at the provider level. The basic reasoning behind these conversations has been to ensure that trust in a particular AI technology used in healthcare is established and then maintained through regular monitoring, rather than simply buying into far-fetched stories. This paper and several others on this topic will be highlighted here, and on Plus91's Medium blog we will put several frameworks together.